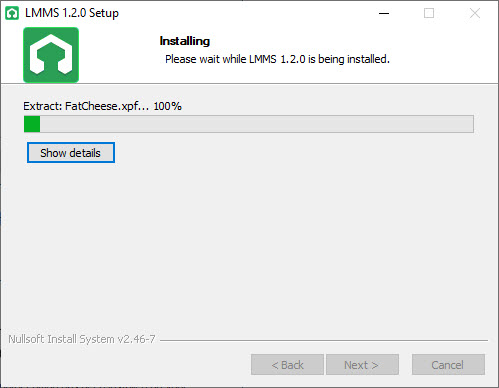

My top rated "in class similar to Nexus, Vanguard and X3TA+" but for free: ClubVoltage1 is a modern control-voltage 32-step sequencer synth based on subtractive synthesis. These are VST plugins I have been using for a long time; some might be new to others, which is why I want to share my list. I will update this list after I've tested more and found more alternatives. Tested in LMMS 1.2.2.

A large language model (LLM) is a computerized language model consisting of an artificial neural network with many parameters (tens of millions to billions), trained on large quantities of unlabeled text using self-supervised learning or semi-supervised learning. LLMs emerged around 2018 and perform well at a wide variety of tasks. This has shifted the focus of natural language processing research away from the previous paradigm of training specialized supervised models for specific tasks.

Though the term large language model has no formal definition, it often refers to deep learning models with millions or even billions of parameters that have been "pre-trained" on a large corpus. LLMs are general-purpose models that excel at a wide range of tasks, as opposed to being trained for one specific task (such as sentiment analysis, named entity recognition, or mathematical reasoning). The skill with which they accomplish tasks, and the range of tasks they can handle, seem to be a function of the amount of resources (data, parameter count, computing power) devoted to them, in a way that does not depend on additional breakthroughs in design. Though trained on simple tasks along the lines of predicting the next word in a sentence, neural language models with sufficient training and parameter counts are found to capture much of the syntax and semantics of human language. In addition, large language models demonstrate considerable general knowledge about the world and are able to "memorize" a great quantity of facts during training.

While the performance of large models on various tasks can generally be extrapolated from the performance of similar smaller models, sometimes "breaks" in downstream scaling laws occur, such that larger models suddenly acquire substantial abilities at a different rate than smaller models do. These are often referred to as "emergent abilities" and have been the subject of substantial study. Researchers note that such abilities often "cannot be predicted simply by extrapolating the performance of smaller models". For example, on a number of natural language benchmarks involving tasks such as question answering, models perform no better than random chance until they reach a certain scale (in this case, measured by training computation), at which point their performance sharply increases.

Emergent abilities are discovered rather than programmed in or designed, in some cases only after the LLM has been publicly deployed. Hundreds of emergent abilities have been described. Examples include multi-step arithmetic, taking college-level exams, identifying the intended meaning of a word, chain-of-thought prompting, decoding the International Phonetic Alphabet, unscrambling a word's letters, identifying offensive content in paragraphs of Hinglish (a combination of Hindi and English), and generating a similar English equivalent of Kiswahili proverbs.

Other researchers argue that emergent abilities are not acquired unpredictably, but predictably, according to a smooth scaling law. They considered a toy statistical model of an LLM solving multiple-choice questions and showed that this model, modified to account for other types of tasks, applies to those tasks as well.
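The idea that a sudden jump in benchmark scores can sit on top of a smooth scaling law can be illustrated with a small sketch. This is not the model from the research mentioned in this post; it is a minimal toy under stated assumptions: per-token accuracy is a hypothetical logistic function of log10 training compute (the `midpoint` and `slope` values are made up), tokens are treated as independent, and an answer only counts as correct if all of its tokens are right.

```python
import math


def per_token_accuracy(log10_compute, midpoint=22.0, slope=0.6):
    """Hypothetical smooth curve: chance of getting one token right.

    Logistic in log10(training compute). The midpoint and slope are
    illustrative assumptions, not measured values.
    """
    return 1.0 / (1.0 + math.exp(-slope * (log10_compute - midpoint)))


def exact_match_accuracy(log10_compute, answer_length=10):
    """Chance that every token of an answer is right.

    Assumes tokens are independent, so the per-token accuracy is
    raised to the power of the answer length.
    """
    return per_token_accuracy(log10_compute) ** answer_length


if __name__ == "__main__":
    # Print both metrics side by side over a range of compute budgets.
    print("log10(compute)  per-token  exact-match")
    for c in range(18, 27):
        print(f"{c:>14}  {per_token_accuracy(c):9.3f}  {exact_match_accuracy(c):11.4f}")
```

In this sketch the per-token column climbs gradually, while the exact-match column stays near zero for most of the range and then rises quickly. The underlying quantity is smooth in both cases; only the all-or-nothing metric makes the improvement look abrupt.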
